Chapter 5 Spatial interference

Details much smaller than ourselves, like the fibers of a sheet of paper, or the individual ink blotches laid down by a printer, are inaccessible to the eye. Visual stimuli that are very close together are experienced as a single unit.

Even when two objects are spaced far apart enough that we perceive them as two objects rather than one, they are not processed entirely separately by the brain. Receptive fields grow larger as signals ascend the visual hierarchy, and this can degrade the representation of objects that are near each other. Such spatial interference is evident in Figure 5.

Figure 5: When one gazes at the central dot, the central letter to the left is not crowded, but the central letter to the right is.

When gazing at the figure’s central dot, you can likely perceive the middle letter to the left fairly easily as a ‘J’. However, if, while still keeping your eyes fixed on the central dot, you instead try to perceive the central letter to the right, the task is much more difficult. This spatial interference phenomenon is called “crowding” in the perception literature (Wolford 1975; Korte 1923; Strasburger 2014).

Most crowding studies ask participants to identify a letter or other stationary target when flanking stimuli are placed at various separations from the target. How separated the flankers must be to avoid impairment of target identification varies somewhat with the spatial arrangement, but is on average about half the eccentricity of the target, with little to no impairment for greater separations (Bouma 1970; Gurnsey, Roddy, and Chanab 2011). Setting the targets and distractors in motion has little effect (Bex, Dakin, and Simmers 2003), suggesting that these results generalize to tracking. Indeed, close flankers not only prevent identification of the target, they can also prevent the target from being individually selected by attention, including for MOT (Intriligator and Cavanagh 2001).
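The half-eccentricity rule of thumb can be written as a short calculation. The Python sketch below is illustrative only: the function names and the default fraction of 0.5 are assumptions chosen to match the approximate figure quoted above, and the true critical spacing varies with the spatial arrangement of the stimuli.

```python
def critical_spacing(eccentricity_deg: float, fraction: float = 0.5) -> float:
    """Approximate spacing (deg) below which flankers impair identification.

    `fraction` is an assumed rule-of-thumb value (about half the eccentricity),
    not a fitted parameter.
    """
    if eccentricity_deg < 0:
        raise ValueError("eccentricity must be non-negative")
    return fraction * eccentricity_deg


def is_crowded(eccentricity_deg: float, separation_deg: float,
               fraction: float = 0.5) -> bool:
    """True if a flanker at `separation_deg` falls inside the crowding zone."""
    return separation_deg < critical_spacing(eccentricity_deg, fraction)


if __name__ == "__main__":
    # Hypothetical example: a target 10 deg from fixation.
    print(critical_spacing(10.0))   # spacing needed to escape crowding
    print(is_crowded(10.0, 3.0))    # flanker 3 deg away
    print(is_crowded(10.0, 6.0))    # flanker 6 deg away
```

With these assumed values, a flanker 3 deg from a target at 10 deg eccentricity lies inside the crowding zone, whereas one 6 deg away lies outside it.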

Crowding happens frequently in typical MOT displays: in most published experiments, objects are not prevented from entering the targets’ crowding zones (which, as mentioned above, extend to about half the stimulus’ eccentricity). It is not surprising, then, that in typical MOT displays, greater proximity of targets and distractors is associated with poorer performance (W. M. Shim, Alvarez, and Jiang 2008; M. Tombu and Seiffert 2008).

5.1 Spatial interference does not explain why tracking many targets is more difficult than tracking only a few

In 2008, Steven Franconeri and colleagues suggested that spatial interference is the only reason why performance is worse when more targets are to be tracked (S. L. Franconeri et al. 2008). In the previous chapter, we introduced the idea of a mental resource that, divided among more targets, results in worse tracking performance. Franconeri suggested that, for tracking at least, the only thing that becomes depleted with more targets is the area of the visual field not undergoing inhibition, with the inhibition stemming from an inhibitory surround around each tracked target (S. L. Franconeri et al. 2008; Steven L. Franconeri, Alvarez, and Cavanagh 2013b; S. L. Franconeri, Jonathan, and Scimeca 2010). In other words, overlap of the inhibitory surrounds of nearby targets is the only reason for worse performance with more targets, and “there is no limit on the number of trackers, and no limit per se on tracking capacity”; “barring object-spacing constraints, people could reliably track an unlimited number of objects as fast as they could track a single object”. Joining Franconeri in making this claim was Zenon Pylyshyn himself as well as other visual cognition researchers including James Enns, George Alvarez, and Patrick Cavanagh, my PhD advisor (S. L. Franconeri, Jonathan, and Scimeca (2010), p.920).

S. L. Franconeri, Jonathan, and Scimeca (2010) tested their theory by keeping object trajectories nearly constant in their experiments but varying the total distance traveled by the objects (by varying both speed and trial length), on the basis that if close encounters were the only cause of errors, errors should be proportional to the total distance traveled. As the theory predicted, performance did decrease with distance traveled, with little to no effect of the different object speeds and trial durations that they used. S. L. Franconeri, Jonathan, and Scimeca (2010) took this as strong support for the theory that only spatial proximity mattered. However, note that they had varied the potential for spatial interference rather indirectly, by varying the total distance traveled by the objects, rather than simply spacing the objects further apart.
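The logic of this design can be made concrete with a little arithmetic. If close encounters are the only source of errors, and the number of encounters grows with distance traveled, then conditions that trade speed against trial duration while holding distance constant should yield the same performance. The sketch below illustrates only that reasoning; the speeds and durations are hypothetical values, not those used in the original experiments.

```python
def distance_traveled(speed: float, duration_s: float) -> float:
    """Total distance covered along a trajectory at constant speed."""
    return speed * duration_s


# Hypothetical (speed, duration in seconds) pairs chosen so that
# speed * duration is constant across conditions.
conditions = [(2.0, 12.0), (4.0, 6.0), (8.0, 3.0)]

distances = [distance_traveled(speed, dur) for speed, dur in conditions]

# Under a spatial-interference-only account, the predicted error rate depends
# on distance alone, so these conditions are predicted to be equivalent.
same_prediction = len(set(distances)) == 1

if __name__ == "__main__":
    for (speed, dur), dist in zip(conditions, distances):
        print(f"speed={speed}, duration={dur} s -> distance={dist}")
    print("Equal predicted performance:", same_prediction)
```

A resource account makes a different prediction for the same conditions: if speed itself taxes tracking, the fastest condition should be hardest even though the distance traveled is matched.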

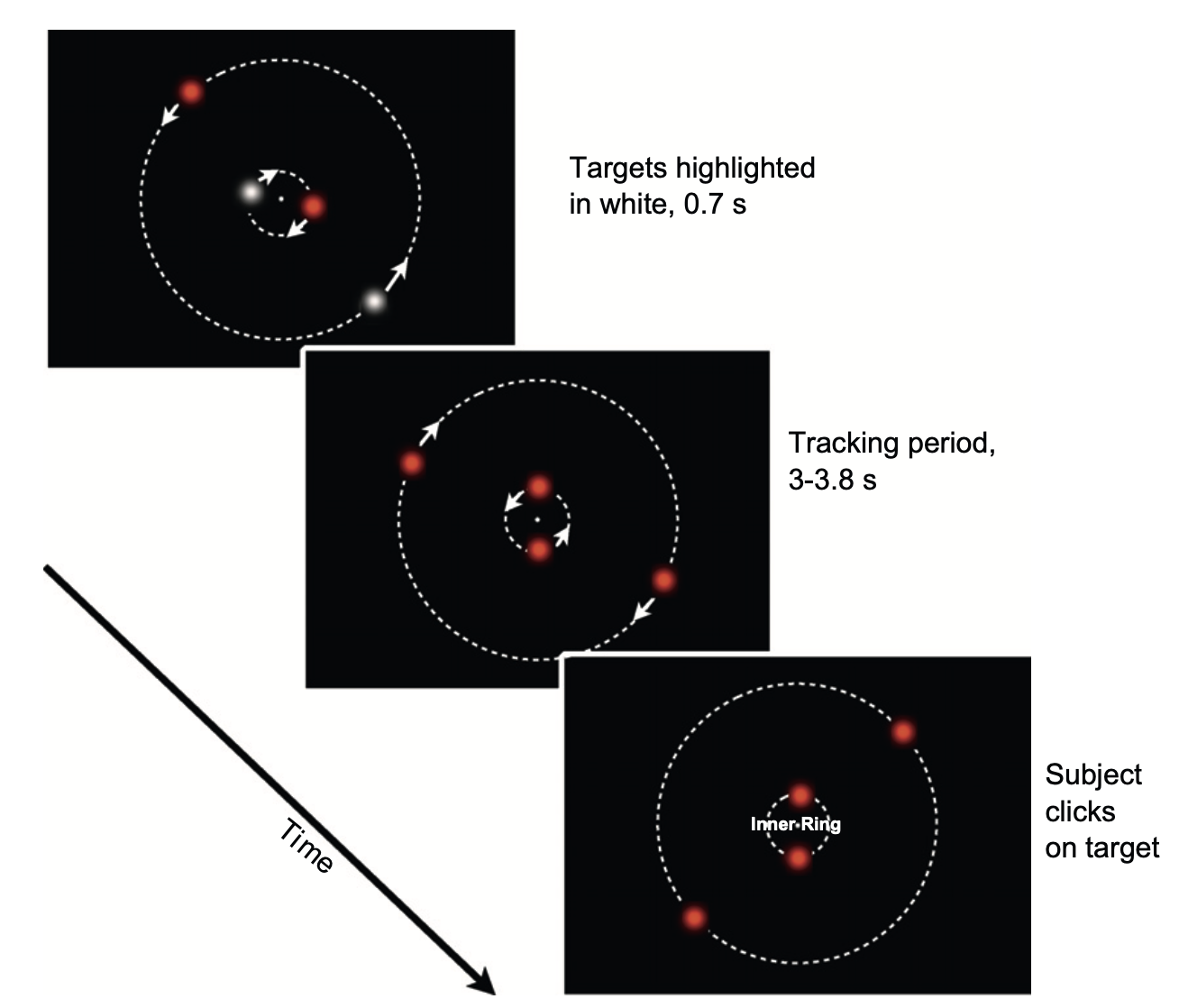

As a more direct test, in 2012 my student Wei-Ying Chen and I used displays in which we could keep the objects widely separated. In one experiment, we created a wide-field display with an ordinary computer screen by having participants bring their noses quite close to it. This allowed us to keep targets and distractors dozens of degrees of visual angle from each other (Alex O. Holcombe and Chen 2012). The basic display configuration is shown in Figure 6.

Figure 6: In experiments by Holcombe and Chen (2012), after the targets were highlighted in white, all the discs became red and revolved about the fixation point. During this interval, each pair of discs occasionally reversed its direction. After 3–3.8 s, the discs stopped, one ring was indicated, and the participant clicked on one disc of that ring.

Even when all the objects in the display were extremely widely spaced, speed thresholds declined dramatically with the number of targets. To us, this appeared to falsify the theory of S. L. Franconeri, Jonathan, and Scimeca (2010), that spatial interference was the only factor that prevented people from tracking many targets. In a 2011 poster presentation, entitled “The resource theory of tracking is right! - at high speeds one may only be able to track a single target (even if no crowding occurs)”, we suggested that each target, regardless of its distance from other objects, uses up some of a limited processing capacity: a resource that was attentional in that it could be applied anywhere in the visual field, or at least anywhere within a hemifield (Section 9). The amount of this resource that is applied to a target determines the fastest speed at which that target can be tracked.

Franconeri et al. did not say why they were unconvinced by the findings of Wei-Ying Chen and me, but they took their spatial interference idea much further, suggesting that it could explain the apparent capacity limits on not just tracking, but also on object recognition, visual working memory, and motor control, writing that in each case capacity limits arise only because “items interact destructively when they are close enough for their activity profiles to overlap” (p.2) (Steven L. Franconeri, Alvarez, and Cavanagh 2013b).

To explain the Alex O. Holcombe and Chen (2012) results, spatial interference would have to extend over a very long distance, farther than anything that had been reported in behavioral studies. If there were such long-range spatial gradients of interference present, it seemed to me that they should have shown up in the results of Alex O. Holcombe and Chen (2012) as worse performance for the intermediate spatial separations tested than for the largest separations we tested. I made this point in Alex O. Holcombe (2019), and in reply, Steven L. Franconeri, Alvarez, and Cavanagh (2013a) pointed to a neurophysiological recording in the lateral intraparietal area (LIP) of rhesus macaque monkeys by Falkner, Krishna, and Goldberg (2010), who cued monkeys to execute a saccade to a visual stimulus. In some trials a second stimulus was flashed 50 ms prior to the saccade execution cue. That second stimulus was positioned in the receptive field of an LIP cell the researchers were recording from, allowing them to show that the LIP cell’s response was suppressed relative to trials that did not include a saccade target. This suppression occurred even when the saccade target was very distant: a statistically significant impairment was found for separations as large as 40 deg for some cells.

The data of Falkner, Krishna, and Goldberg (2010) were consistent with the spatial gradient of this interference being quite shallow, allowing Steven L. Franconeri, Alvarez, and Cavanagh (2013a) to write that “levels of surround suppression are strong at both distances, and thus no difference in performance is expected” for the separations tested by Holcombe and Chen (2012). One property of the neural suppression documented by Falkner, Krishna, and Goldberg (2010) strongly suggests, however, that it is not one of the processes that limit our ability to track multiple objects. Specifically, Falkner, Krishna, and Goldberg (2010) found that nearly as often as not, the location in the visual field that yielded the most suppression was not in the same hemifield as the receptive field center. But as we will see in Section 9, the cost of additional targets for MOT is largely independent in the two hemifields. Evidently, then, the suppression observed in LIP is not what causes worse MOT performance when there are more targets. Instead, as Falkner, Krishna, and Goldberg (2010) themselves concluded, these LIP cells may mediate a global (not hemifield-specific) salience computation for prioritizing saccade or attentional targets.

Having failed to find behavioral evidence for long-range spatial interference, my lab decided to focus on the form of spatial interference that we were confident actually existed: short-range interference. Previous studies of tracking did not provide much evidence about how far that interference extended - either they did not control for eccentricity (e.g., Feria (2013)) or they only tested a few separations (e.g., Michael Tombu and Seiffert (2011)).

In experiments published in 2014, we assessed tracking performance for two targets using spatial separations that ranged from within the interference distance documented in crowding studies through to very large separations. Our experiments confirmed that interference was confined to a short range (Alex O. Holcombe, Chen, and Howe 2014). Specifically, performance improved with separation, but only up to a distance of about half the target’s eccentricity, as is also found for crowding (Strasburger 2014). In a few experiments there was a trend for better performance as separation increased further, beyond the crowding zone, but this effect was small and not statistically significant. These findings were consistent with our supposition from our previous studies: spatial interference is largely confined to the crowding range. When objects are widely spaced, then, the deficit associated with tracking more targets is caused by a limited processing resource.

One result did surprise us: in the one-target conditions only, outside the crowding range, we found that performance decreased with separation from the other pair of (untracked) objects. This unexpected cost of separation was only statistically significant in one experiment, but the trend was present in all four experiments that varied separation outside the crowding range. This might be explained by configural or group-based processing (Section 8), as grouping declines with distance (Kubovy, Holcombe, and Wagemans 1998).

5.2 The mechanisms that cause spatial interference

As explained at the beginning of this chapter, one cause of short-range spatial interference is simply poor spatial resolution. If tracking cannot distinguish between two locations, either because of a noisy representation of those locations or because the two locations are treated as one, then a target may often be lost when it comes too close to a distractor. This would be true of any imperfect mechanism, biological or man-made. The particular way that the human visual system is put together, however, results in forms of spatial interference that do not occur in, for example, many computer algorithms engineered for object tracking.

Our visual processing architecture has a pyramid-like structure, with local, massively parallel processing at the retina, followed by a gradual convergence to neurons at higher stages with receptive fields responsive to large regions. Processes critical to tasks like tracking or face recognition rely on these higher stages. Face-selective neurons, for example, are situated in temporal cortex and have large receptive fields. For tracking, the parietal cortex is thought to be more important than the temporal cortex, but the neurons in these parietal areas also have large receptive fields.

A large receptive field can be a problem when the task is to recognize an object in clutter. Without a mechanism to prevent processing of the other objects that share the receptive field, object recognition would have access to only a mishmash of the objects’ features. Indeed, this indiscriminate combining of features is thought to be one reason for the phenomenon of illusory perceptual conjunctions of features from different objects (Anne Treisman and Schmidt 1982). For object tracking as well, isolating the target is necessary to keep it distinguished from the distractors.

In principle, our visual systems might include selection processes that, when selecting a target, can completely exclude distractors’ visual signals from reaching the larger receptive fields. Implementing such a system using realistic biological mechanisms with our pyramid architecture, however, is difficult (J. K. Tsotsos et al. 1995). Indeed, while the signals from stimuli irrelevant to the current task are suppressed to some extent, neural recordings reveal that they still have an effect on responses. The computer scientist John Tsotsos has championed surround suppression as a practical way for high-level areas of the brain to isolate a target stimulus. Such suppression likely involves descending connections from high-level areas and possibly recurrent processing (John K. Tsotsos et al. 2008). However, the evidence I have reviewed suggests that these effects are not spatially extensive enough to explain why we can only track a limited number of objects.

The possibility of a suppression zone specific to targets remains understudied, as very few studies of crowding have varied the number of targets. I have found one relevant study, which found that attending to additional gratings within the crowding range of a first grating resulted in greater impairment for identifying a letter (Mareschal, Morgan, and Solomon 2010). This is consistent with the existence of surround suppression around each target. Unfortunately, however, the study did not investigate how much further, if at all, spatial interference extended when there are more targets.

Although spatial interference in MOT does not extend very far, many MOT experiments involve targets and distractors coming very close to each other, so spatial interference likely contributes to many of the errors in a typical MOT experiment. As we have seen in this chapter, that may be largely a data limitation: something that occurs regardless of the number of targets, as a result of the inherent ambiguity regarding which is a target and which is a distractor during close encounters for any system with limited spatial resolution. When objects are kept widely separated, it appears that spatial interference plays little to no role in tracking.

Rather than spatial interference, then, something else is needed to explain the dramatic decline in tracking performance that can be found with more targets even in widely-spaced displays (Alex O. Holcombe, Chen, and Howe 2014; Alex O. Holcombe and Chen 2012; Alex O. Holcombe and Chen 2013). The processes underlying this capacity limitation can be described as “an attentional resource”, but that doesn’t tell us anything about how they work. To gain insight into the tracking processes, we would like to know what specific aspect(s) of tracking become impaired with higher target load. A major clue was provided by Alex O. Holcombe and Chen (2013), whose experiments revealed that temporal interference from distractors becomes much worse when there are more targets.

Temporal interference occurs when a target’s location is not sufficiently separated in time from a distractor visiting that location. That is, if distractors visit a location too soon before and after a target has visited that location, people are unable to track. The temporal separation needed increases steeply with the number of targets tracked, approximately linearly according to the evidence so far (Alex O. Holcombe and Chen 2013; Roudaia and Faubert 2017). This is easiest to explain by serial switching models (see Alex O. Holcombe (2022) for a review). In summary, spatial resolution is not affected much, if at all, by target load, but temporal resolution is. This is our fourth main conclusion about tracking, as was previewed in Section 1.1.
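To make “separated in time” concrete, consider the simplified case of equally spaced objects sharing one circular trajectory, as in the revolving-disc displays of Figure 6. The sketch below is a minimal illustration under that assumption: the function names are hypothetical, and the required separation is supplied by the caller rather than being an empirical estimate.

```python
def temporal_separation_s(n_objects: int, revolutions_per_s: float) -> float:
    """Time (s) between successive objects passing any one location.

    Assumes `n_objects` equally spaced on a shared circular path, all
    revolving at `revolutions_per_s`, so a location is visited
    n_objects * revolutions_per_s times per second.
    """
    if n_objects <= 0 or revolutions_per_s <= 0:
        raise ValueError("n_objects and revolutions_per_s must be positive")
    return 1.0 / (n_objects * revolutions_per_s)


def is_trackable(n_objects: int, revolutions_per_s: float,
                 required_separation_s: float) -> bool:
    """True if objects are far enough apart in time to be tracked.

    `required_separation_s` is a hypothetical threshold; per the text it
    would grow with the number of targets being tracked.
    """
    return temporal_separation_s(n_objects, revolutions_per_s) >= required_separation_s


if __name__ == "__main__":
    # Hypothetical values: a threshold of 0.3 s at 1 revolution per second.
    print(temporal_separation_s(2, 1.0))   # two objects on the ring
    print(is_trackable(2, 1.0, 0.3))
    print(is_trackable(4, 1.0, 0.3))       # four objects on the ring
```

Because the separation depends on the product of object count and revolution rate, adding objects to a ring or speeding them up shortens it in the same way. A required separation that rises with target load therefore implies a lower maximum trackable speed when more targets are tracked.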